There comes a moment in every adult’s life when a cashier looks at your driver’s license, squints, and says, “Oh wow,” in a tone normally reserved for archaeological discoveries. You can feel it happen in real time. Your transition from “person buying toothpaste” to “relic of generations past.”

You want to ease the tension. Maybe say something like, “Relax, I wasn’t alive for the signing of the Magna Carta or anything,” usually followed by an anxious laugh, but you also don’t want to sound defensive, which is exactly how old people sound when they insist they are not, in fact, old.

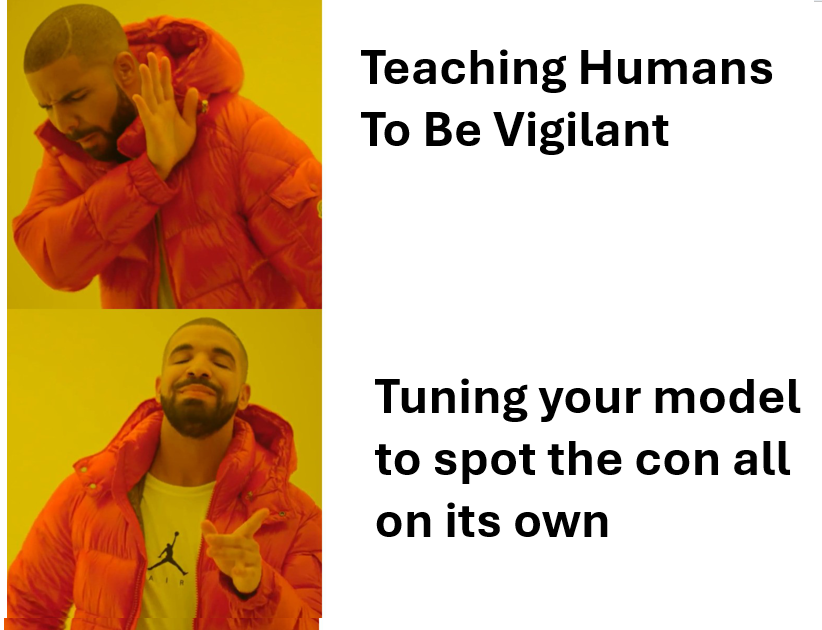

Ageism is less like a sharp insult and more like a slow leak. No one comes up to you and says, “Hello, I believe you are past your prime.” Instead, they ask if you’ve ever used TikTok, as if it’s a complex piece of farm equipment. They speak to you about technology in the same tone you’d use to explain a toaster to a golden retriever.

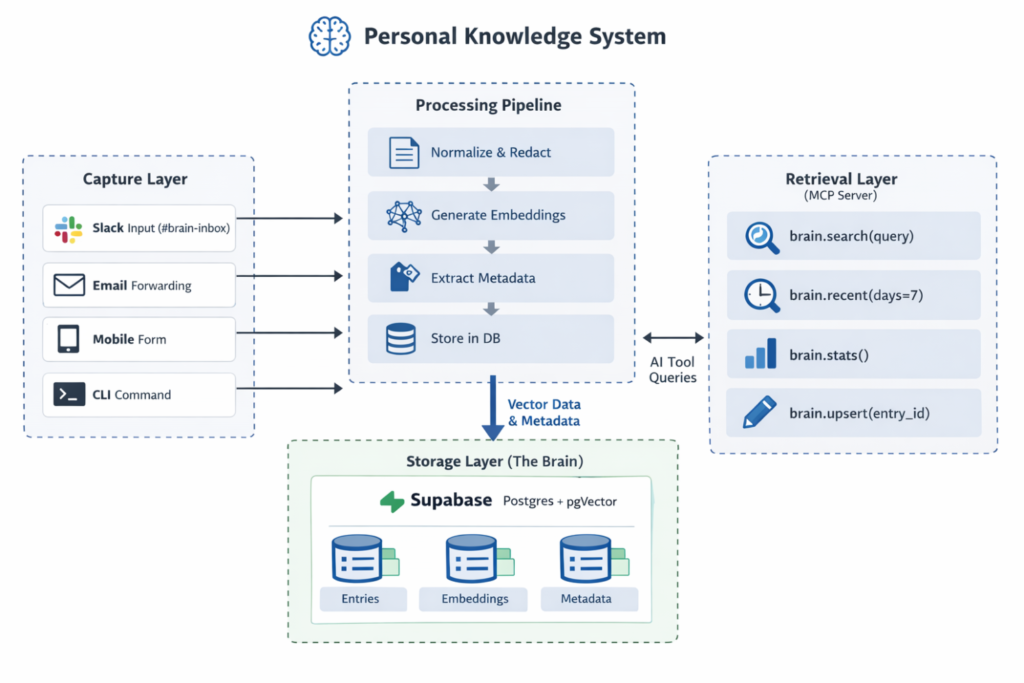

“You just tap the screen,” they say, nodding encouragingly, as though you might eat the phone if left unsupervised. And never mind the fact that you build data pipelines and apps and stuff and could run circles around them when it comes to such things. You are old now. You just don’t know it.

I noticed it first at the gym, which I joined in a fit of optimism / delusion. The place is full of young people who move with reckless abandon, as if they have never thrown out their backs by sneezing and never will. They wear clothes that seem assembled from optimism and elastic, while I wear something functional that could double as an outfit for a grocery run or an afternoon of yard work. One of the trainers approached me with the concerned indifference with which one might approach a confused man at a bus station.

“Do you need help with that machine?” he asked. I was standing next to a water fountain.

“No,” I said. “I’m hydrating.” He smiled the way you smile at a child who insists the moon is following them.

“Great job,” he said. “Keep up the good work!”

Ageism is often disguised as kindness, which makes it harder to protest without seeming ungrateful. If someone calls you incompetent outright, you can argue. But when they say, “Take your time,” while guiding your hand away from a touchscreen, you’re left wondering if perhaps you should take your time. Maybe time is all you have now. Maybe time is, in fact, your whole personality.

The workplace, of course, has its own delicate choreography. There is a fun kind of horror in being described as “experienced.” It sounds like a compliment until you realize it is the corporate equivalent of saying, “This yogurt is almost past the sell-by date.” You’re invited to meetings where your role is to provide historical context: a somewhat more polite version of “Tell us about the time before Wi-Fi, grandfather.”

Meanwhile, younger colleagues speak in acronyms and young-ish vernacular that seem to have been generated by a malfunctioning robot fed Reddit data. “Betttt no cap fr fr on God I’m literally highkey lowkey mid but also slaying, it’s giving delulu main character energy, periodt, like why is it kinda bussin but also hella sus, I’m crying screaming throwing up 💀✨,” they say, and you nod, pretending that you are not searching for a way to jump out of the nearest window.

You resist the urge to say, “In my day,” because you know that phrase is the conversational equivalent of pulling the emergency brake on a train, and also because saying such things only calls attention to the fact that it is no longer Your Day. But you do it anyway, because in my day, people could say things like that.

And yet, for all its absurdities, ageism carries an aloof cruelty. It suggests that value is something that peaks early and then declines, like a banana left too long on the counter. It assumes that curiosity has an expiration date, that adaptability is the exclusive property of the young, and that wisdom is merely a consolation prize for no longer being invited to certain parties.

Aging is the one club that, given enough time, everyone joins. The same people who explain phrases like “no cap” to you as though you are a particularly slow student will one day find themselves squinting at a screen, wondering why everything has become so small and so fast and so inexplicably smug. They, too, will be told they are “brave” for attempting to keep up.

I sometimes imagine a future in which ageism is treated the way we now treat other outdated prejudices: with a mixture of embarrassment and disbelief.

“Can you believe they used to assume people over forty couldn’t learn new things?” someone will say, shaking their head, while an eighty-year-old programs a quantum computer or, more likely, tries to figure out why the remote control has seventeen buttons that all seem to do the same thing.

Until then, we muddle through, collecting small indignities like souvenirs. We laugh about them, because laughter is cheaper than therapy and easier than confronting the fact that time is, in fact, passing. We dye our hair or don’t, download the app or don’t, correct the cashier or let them marvel at our age like we are a rare and possibly endangered species.

And occasionally, we surprise ourselves. We learn something new, move a little faster than expected, understand a joke that was not, technically, meant for us. In those moments, age feels less like a verdict and more like a strange, ongoing experiment in which the results are not yet in, no matter what anyone at the gym might think.

“Great job,” the trainer said again as I finished drinking my water.

“Thank you,” I replied, because at a certain point, you take your victories where you can get them.