One of the great lies of modern professionalism is that we are “multitaskers.” We say it with pride, as if it’s a medal we’ve pinned to our own lapel. I once put it on a performance review. “Highly capable of multitasking.” What I meant was: I can be equally mediocre at twelve things at once.

The real struggle, the one no one wants to admit, is context switching.

Unless you’re some kind of Master-ADHD savant who thrives on ricocheting from spreadsheet to Slack to budget deck to compliance form, switching from one thing to another is like trying to change trains while they’re both still moving. You never quite land your footing. You just cling and hope.

Last Tuesday, I opened my laptop to answer a single email. One email. A quick one. The kind where you say, “Yes, that works,” and then you go back to your coffee and your sense of being a capable adult.

Instead, I opened the inbox. Then Slack popped up with a red badge of urgency. I clicked it. Someone had a question about a dashboard. To answer that, I needed to open the dashboard. Which meant logging into the BI tool. Which required a VPN reconnect. Which triggered a security update. Which reminded me I hadn’t finished that documentation. Which led me to open Confluence. Which had a comment thread. Which required context I had written in a Google Doc. Which required context from a meeting. Which required context from a decision made three months ago.

And somewhere in the background, that original email sat there like a patient dog, wondering why I had abandoned it. By the time I returned to the email, I had lost the plot entirely. I could not remember why the answer was “yes.” I had become a professional amnesiac, paid handsomely to forget things I had just remembered.

This is not a personal failing. It is the condition of modern work. We toggle. We jump. We reload. We re-explain. A study published in Harvard Business Review found that digital workers switch between applications more than a thousand times a day. I read that and felt oddly relieved. I had assumed I was uniquely defective.

Context switching is exhausting because your brain has to rebuild the world every time you move. Who am I in this window? What project is this? What constraints matter here? What did we already decide? The mental cost isn’t visible, but it is real.

Our AI tools suffer from the exact same problem.

We like to imagine AI as this vast, humming intelligence. And in many ways, it is. It can draft, summarize, code, analyze. But every time we open a new chat window, it wakes up like a brilliant but slightly concussed intern.

“Hi,” it says. “Who are you again?”

We spend the first several minutes re-explaining our role, our project, our constraints, the people involved, the decision history, and the thing we already tried. It is digital déjà vu all over again. We complain that AI isn’t as transformative as promised, or that it hasn’t changed our output the way the headlines said it would, and in some instances that may be true. But it’s beginning to look like the bottleneck isn’t intelligence. Maybe the bottleneck is memory.

The best prompt in the world can’t compensate for an AI that has no idea what you’ve been working on for six months. It can’t intuit the project politics, the prior trade-offs, the buried assumptions. So it does what we do when we lack context: it guesses. And when we switch tools, or switch from one model to another, the amnesia resets. None of them talk to each other. Each one is a sealed jar of memory. You build history in one, and it stays there, like a diary locked in someone else’s desk.

We have recreated our own problem inside our machines.

Just as we were getting comfortable with chatbots, we introduced autonomous agents: the overachievers of the AI world. They don’t just answer questions. They act. They browse. They plan. They execute multi-step tasks.

And what do agents need more than anything? Context. An agent negotiating on your behalf needs to know your budget, your preferences, your past decisions, your relationships, your risk tolerance, and more. Without that, it is little more than a clueless assistant. It can click buttons, but it doesn’t know which ones matter.

Most AI platforms do have memory, but they tend to design it as a way to keep you. If your context lives inside their tool, you’re less likely to leave. It’s clever. It works. Once a system “knows” you, abandoning it feels like moving cities and leaving your entire friend group behind. But the memory doesn’t follow you. Try a new model and you start from zero. The context is trapped.

So as agents become more powerful, the fragmentation becomes more painful. You don’t just lose convenience. You lose capability. An agent without access to your accumulated knowledge is like a lawyer without case files. It can argue eloquently but has no idea what happened last Tuesday. How are you gonna get out of that speeding ticket that way?

We are building a future where AI agents may be central collaborators. But we are starving them of the one thing that makes collaboration real: persistent, shared memory.

Enter: MyBrain.

MyBrain is not another app with a prettier interface and a promise to “organize your life.” It is a persistent memory layer that sits underneath everything you use. Instead of your context being scattered across Slack threads, email chains, dashboards, and half-finished notes, MyBrain captures your thinking as it happens and stores it in a structured, machine-readable way. Every decision, constraint, insight, and stray idea becomes part of a growing, searchable memory that doesn’t reset when you open a new AI window.

What makes it different is portability. The memory doesn’t belong to a single chatbot. It isn’t trapped in one model’s internal recall feature. It lives in a database you control. Any AI tool or agent that can speak the right protocol can access it. So when you switch from one AI to another, you’re not starting over. The tool changes, but the memory stays the same. That’s the persistent memory problem solved: context stops evaporating and starts compounding.
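Here is one way “structured, machine-readable” and “portable” might look in practice. This is a minimal sketch, assuming a hypothetical `Thought` record whose field names are illustrative rather than MyBrain’s actual schema. Because each record serializes to plain JSON, any tool that can read JSON can consume it without a vendor-specific SDK.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class Thought:
    """One captured unit of memory. Field names are hypothetical."""

    text: str
    kind: str  # e.g. "decision", "constraint", "insight", "idea"
    source: str  # where it was captured, e.g. "slack" or "email"
    people: list[str] = field(default_factory=list)
    topics: list[str] = field(default_factory=list)
    captured_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Plain JSON: readable by any tool or agent, no vendor SDK required.
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "Thought":
        # Rebuild the record from JSON written by any other tool.
        return cls(**json.loads(raw))


note = Thought(
    text="Chose the annual plan because finance wants predictable costs.",
    kind="decision",
    source="slack",
    people=["Priya"],
    topics=["budget"],
)
payload = note.to_json()
```

The round trip (`Thought.from_json(payload)`) gives back an equal record, which is the whole point: the memory outlives whichever tool wrote it.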

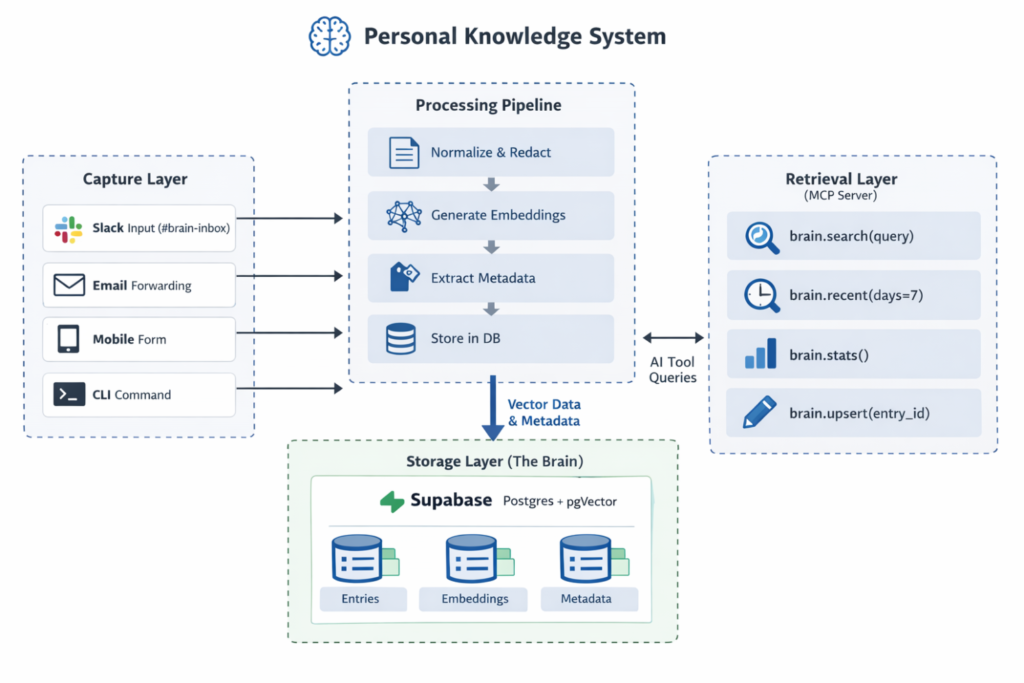

Building MyBrain means assembling dependable parts in the right order. At the core is a Postgres database with vector support, which allows thoughts to be stored not just as text, but as mathematical representations of meaning. When you capture a note, maybe by typing into a dedicated Slack channel, it flows through a lightweight processing layer that cleans the text, generates an embedding, extracts basic metadata (people, topics, action items), and writes everything into the database.
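The capture path in this paragraph (clean, embed, extract metadata, store) can be sketched in a few dozen lines. This is a minimal sketch under stated assumptions, not MyBrain’s code: SQLite from the standard library stands in for Postgres so the example runs anywhere, `embed` is a toy hashing vectorizer rather than a real embedding model, and the @mention, #tag, and TODO conventions used for metadata are hypothetical. The Slack side of capture is left out.

```python
import hashlib
import json
import math
import re
import sqlite3

DIM = 64  # toy size; real embedding models produce hundreds to thousands of dimensions


def clean(text: str) -> str:
    # Collapse runs of whitespace (including newlines) and trim the ends.
    return re.sub(r"\s+", " ", text).strip()


def embed(text: str) -> list[float]:
    # Stand-in for a real embedding model: hash each word into a bucket,
    # then L2-normalize. This captures word overlap, not true meaning.
    vec = [0.0] * DIM
    for word in re.findall(r"[a-z0-9']+", text.lower()):
        bucket = int(hashlib.sha256(word.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def extract_metadata(text: str) -> dict:
    # Hypothetical conventions: @name for people, #tag for topics,
    # and "TODO: ..." (up to the next period) for action items.
    return {
        "people": re.findall(r"@(\w+)", text),
        "topics": re.findall(r"#(\w+)", text),
        "action_items": re.findall(r"TODO[:\s]+([^.]+)", text),
    }


def capture(conn: sqlite3.Connection, raw: str) -> int:
    # Clean, embed, extract, and write one thought; return its row id.
    text = clean(raw)
    cur = conn.execute(
        "INSERT INTO thoughts (text, embedding, metadata) VALUES (?, ?, ?)",
        (text, json.dumps(embed(text)), json.dumps(extract_metadata(text))),
    )
    conn.commit()
    return cur.lastrowid


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE thoughts (id INTEGER PRIMARY KEY, text TEXT, "
    "embedding TEXT, metadata TEXT, created_at TEXT DEFAULT CURRENT_TIMESTAMP)"
)
row_id = capture(conn, "  Met with @dana about #pricing.\nTODO: send the revised quote.  ")
```

In the Postgres version the paragraph describes, the embedding would sit in a proper vector column (for example, one provided by the pgvector extension) rather than a JSON string, and metadata extraction could just as well be handed to a language model instead of regular expressions.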

On top of that sits a small server that exposes search and retrieval tools (semantic search, recent entries, simple summaries) through a standard protocol that AI clients can use. That server becomes the doorway into your memory. The result is simple but powerful: capture from anywhere, store in one place, retrieve from any AI. It’s not flashy. It’s infrastructure. But once it’s in place, your work stops feeling like a series of disconnected chats and starts feeling like a continuous conversation.
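To make the “doorway” concrete, here is a minimal sketch of two of the tools this paragraph mentions, semantic search and recent entries, behind a tiny request handler. Assumptions, stated loudly: the notes, function names, and tool names are hypothetical; the “embedding” is a bag-of-words count vector standing in for a learned model; and the handler only imitates the JSON-RPC 2.0 request shape. (The Model Context Protocol, one standard AI clients speak, is built on JSON-RPC 2.0, but this is not an implementation of it.)

```python
import json
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Stand-in for a learned embedding: a bag-of-words term-count vector.
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse count vectors.
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical stored memories: (ISO date, text). ISO dates sort correctly as strings.
NOTES = [
    ("2026-03-03", "Decided to delay the launch until legal review is done"),
    ("2026-03-10", "Dashboard revenue numbers exclude refunds"),
    ("2026-03-12", "Vendor contract renewal is due in April"),
]
STORE = [(day, text, embed(text)) for day, text in NOTES]


def semantic_search(query: str, k: int = 3) -> list[str]:
    # Rank every stored note by similarity to the query; return the top k.
    q = embed(query)
    ranked = sorted(STORE, key=lambda row: cosine(q, row[2]), reverse=True)
    return [text for _, text, _ in ranked[:k]]


def recent_entries(n: int = 3) -> list[str]:
    # Newest notes first.
    newest = sorted(STORE, key=lambda row: row[0], reverse=True)
    return [text for _, text, _ in newest[:n]]


TOOLS = {"semantic_search": semantic_search, "recent_entries": recent_entries}


def handle(raw_request: str) -> str:
    """Answer a JSON-RPC 2.0 request whose method is "tools/call"."""
    req = json.loads(raw_request)
    reply = {"jsonrpc": "2.0", "id": req.get("id")}
    if req.get("method") != "tools/call":
        reply["error"] = {"code": -32601, "message": "Method not found"}
    elif req["params"]["name"] not in TOOLS:
        reply["error"] = {"code": -32602, "message": "Unknown tool"}
    else:
        tool = TOOLS[req["params"]["name"]]
        reply["result"] = tool(**req["params"].get("arguments", {}))
    return json.dumps(reply)


request = json.dumps({
    "jsonrpc": "2.0", "id": 1, "method": "tools/call",
    "params": {"name": "semantic_search",
               "arguments": {"query": "why was the launch delayed?", "k": 1}},
})
response = handle(request)
```

Any client that can send that request shape gets the same answer, regardless of which model sits behind it. In a Postgres setup with pgvector, the ranking step would typically move into SQL as an `ORDER BY embedding <=> query_vector` clause (cosine distance) instead of a Python sort.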

To see the advantage of this approach, imagine two professionals. Person A opens an AI tool, re-explains their situation, gets a decent answer, and moves on. Next week, they do it again. The week after, again. Their AI is always starting from zero. Person B has a persistent memory layer. Every thought captured. Every decision logged. Every meeting debriefed. Every constraint stored. When they ask a question, the AI already knows the backstory.

Same model. Different outcomes. The difference compounds. Each captured insight makes the next query smarter. Each logged decision reduces ambiguity. Over time, the AI stops feeling like a party trick and starts feeling like a colleague who has been in the room for every meeting. When you design memory for machines, you often improve it for yourself. You become clearer. More deliberate. You stop relying on “I think we decided…” and start saying, “We decided this, on March 3rd, because of X.” Clarity for agents becomes clarity for humans.
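Under the hood, “the AI already knows the backstory” usually means that retrieved memories are placed in the model’s context ahead of the question. A minimal sketch, assuming a hypothetical `Decision` record and `build_prompt` helper (how MyBrain actually formats context isn’t described here), that also turns logged decisions into the kind of dated, reasoned sentence this paragraph describes:

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Decision:
    """A logged decision (hypothetical record type)."""

    decided_on: date
    what: str
    why: str

    def as_sentence(self) -> str:
        # Renders e.g. "On March 3, we decided to ... because ...".
        return (
            f"On {self.decided_on:%B} {self.decided_on.day}, "
            f"we decided {self.what} because {self.why}."
        )


def build_prompt(question: str, decisions: list[Decision]) -> str:
    # Put the backstory, oldest first, ahead of the question so the
    # model reads the history before it answers.
    lines = ["Relevant history:"]
    ordered = sorted(decisions, key=lambda d: d.decided_on)
    lines += [f"- {d.as_sentence()}" for d in ordered]
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)


history = [
    Decision(date(2026, 3, 3), "to delay the launch", "legal review was not finished"),
    Decision(date(2026, 2, 14), "to drop the mobile beta", "usage was too low"),
]
prompt = build_prompt("Can we announce the launch date yet?", history)
```

Person A and Person B can send the same question to the same model; only Person B’s prompt arrives with the history attached.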

The gap in the coming decade won’t just be between people who use AI and people who don’t. It will be between those who treat AI as a clever chatbot and those who treat it as a long-term collaborator. Collaboration requires shared context. Shared context requires architecture. Architecture requires intention.

If context switching is the great cognitive tax of our era, then persistent memory is the antidote. For us. For our tools. For the agents we are increasingly trusting with real work. Somewhere in all of this, that original email is still waiting to be answered. But if I had a system that remembered what I was doing before Slack blinked at me, I might just get to it in one pass.

And that, in 2026, feels like a minor miracle.